
False (default): Warn if the version information stored in the metastore doesn't match the one from the Hive jars. You can add your Spark job as a step to the cluster.

#Install apache spark on ec2 security group upgrade#

Enforce metastore schema version consistency. True: Verify that the version information stored in the metastore matches the one from the Hive jars. Users are required to manually migrate the schema after a Hive upgrade.

Username to use against metastore database.

jdbc:mysql://host-name:3306/hive?createDatabaseIfNotExist=true
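To make the metastore settings concrete, here is a minimal Python sketch that writes them out in the `hive-site.xml` property layout. It assumes the standard Hive property names (`javax.jdo.option.ConnectionURL`, `javax.jdo.option.ConnectionUserName`, `javax.jdo.option.ConnectionPassword`, `hive.metastore.schema.verification`). The helper name `build_hive_site` and the user name and password values are placeholders for illustration, not values taken from this guide.

```python
import xml.etree.ElementTree as ET


def build_hive_site(jdbc_url, user, password, verify_schema=False):
    """Return hive-site.xml text holding the metastore connection settings.

    verify_schema=False mirrors the default described in the text above
    (warn on a schema version mismatch instead of enforcing it).
    """
    props = {
        "javax.jdo.option.ConnectionURL": jdbc_url,
        "javax.jdo.option.ConnectionUserName": user,
        "javax.jdo.option.ConnectionPassword": password,
        "hive.metastore.schema.verification": str(verify_schema).lower(),
    }
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    if hasattr(ET, "indent"):  # pretty-print where available (Python 3.9+)
        ET.indent(root)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(build_hive_site(
        "jdbc:mysql://host-name:3306/hive?createDatabaseIfNotExist=true",
        user="hive_user",      # placeholder, replace with your own
        password="change-me",  # placeholder, replace with your own
    ))
```

Generating the file from one function keeps the JDBC URL, credentials, and schema verification flag in a single place, which helps when the same settings have to be copied to several EC2 nodes.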

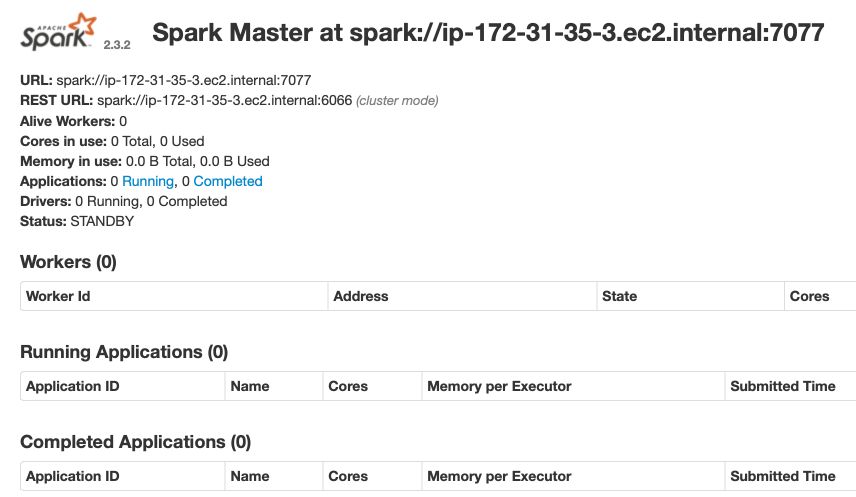

Password to use against metastore database.

fs. SESSION-ACCESS-KEY fs. SESSION-SECRET-KEY fs.s3a.endpoint s3.$

The component versions listed here are the ones we selected during testing. If you need to use other versions for deployment, you can replace them yourself, but make sure the component versions remain compatible.

Deployment process: 1. Configure environment variables. Apply for AWS EC2 Linux instances as required. Create Amazon RDS for MySQL instances to serve as the Kylin and Hive metadata databases.

After implementing support for build and query in Spark Standalone mode, we tried deploying Kylin 4.0 without Hadoop on AWS EC2 instances, and successfully built a cube and queried it.

1. Compared with an AWS EMR node, an AWS EC2 node has lower cost.
2. On an EC2 node, users can choose more independently which services and components to install and deploy.
3. The Hadoop ecosystem is heavy and takes a certain labor cost to maintain. Removing Hadoop brings the deployment closer to cloud-native.

Configuration is a mandatory installation requirement. However, it is more useful in a distributed environment.

Log in to the new EC2 instance using ssh: ssh -i aws-key.pem ubuntu@172.31.58.109

Step 6: Configure Security Group. Set Assign a security group to Select an existing security group, and choose the default security group to make sure that the instance can access your EFS file system. Alternatively, select the Create a new security group checkbox. The Spark EC2 script automatically creates a separate security group and firewall rules for running the Spark cluster.
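For readers who set up the security group by hand instead of relying on the Spark EC2 script, the following Python sketch shows what a small set of firewall rules for a standalone Spark cluster might look like. It only builds the rule list in the `IpPermissions` shape that the EC2 API expects and does not call AWS. The function name `spark_ingress_rules`, the choice of ports (22 for SSH, 8080 for the Spark standalone master web UI default), and the example group ID and CIDR are assumptions for illustration, not the rules the script actually creates.

```python
import ipaddress


def spark_ingress_rules(group_id, admin_cidr):
    """Build ingress rules in the IpPermissions shape used by the EC2 API.

    group_id   -- ID of the security group shared by all cluster nodes
    admin_cidr -- address range allowed to reach SSH and the master web UI
    """
    # Raises ValueError early if the CIDR string is malformed.
    ipaddress.ip_network(admin_cidr)

    def tcp(from_port, to_port, **source):
        return {"IpProtocol": "tcp", "FromPort": from_port,
                "ToPort": to_port, **source}

    return [
        # SSH from the administrator's address range only.
        tcp(22, 22, IpRanges=[{"CidrIp": admin_cidr}]),
        # Spark standalone master web UI (default port 8080).
        tcp(8080, 8080, IpRanges=[{"CidrIp": admin_cidr}]),
        # All TCP traffic between members of the same group, so the
        # master and workers can reach each other on any port.
        tcp(0, 65535, UserIdGroupPairs=[{"GroupId": group_id}]),
    ]


if __name__ == "__main__":
    # Both arguments are made-up example values.
    for rule in spark_ingress_rules("sg-0123456789abcdef0", "203.0.113.0/24"):
        print(rule)
```

A list in this shape could then be passed as `IpPermissions` to boto3's `authorize_security_group_ingress`, assuming boto3 is installed and AWS credentials are configured. Restricting SSH and the web UI to one address range, while allowing traffic inside the group, is one common design and can be tightened further.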

#Install apache spark on ec2 security group manual#

There are a lot of topics to cover, and it may be best to start with the keystrokes needed to stand up a cluster of four AWS instances running Hadoop and Spark using Pegasus. Clone the Pegasus repository and set the necessary environment variables detailed in the 'Manual' installation section of the Pegasus README. Name your instance and choose Next: Configure Security Group.

Compared with Kylin 3.x, Kylin 4.0 implements a new Spark build engine and Parquet storage, making it possible to deploy Kylin without a Hadoop environment. Compared with deploying Kylin 3.x on AWS EMR, deploying Kylin 4 directly on AWS EC2 instances has advantages in cost, flexibility, and maintenance effort.